The website was fast when it was tested before launch. It went live and started attracting visitors. Then, suddenly, an SEO specialist reported that Google had flagged the website for loading slowly.

Visitors leave the website, tired of waiting

According to Google, a user will wait up to 3 seconds for a page to load. Search engines therefore favor websites that load quickly.

An Unbounce poll showed how much loading speed matters to online shoppers: 45% are inclined to abandon a purchase from a slow retailer, and 36% will never return to it.

Web page loading speed is evaluated by Google PageSpeed Insights (PSI), Pingdom, GTmetrix, WebPageTest, and Sitespeed. Let’s analyse it using PSI as an example.

The service analyses a page's content and reports on its performance with a list of issues, optimization recommendations, and an estimate of the loading time that could be saved if the suggestions were implemented. A page’s performance is scored as follows: a score of 90 or above indicates high speed, 50 to 89 indicates medium speed, and below 50 is considered poor.
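The scoring bands can be expressed as a small lookup. This is only an illustrative sketch: the function name `classify_psi_score` and the band labels are assumptions made for this example, not something PSI itself exposes.

```python
def classify_psi_score(score: int) -> str:
    """Map a PSI performance score (0-100) to the band described in the text."""
    if not 0 <= score <= 100:
        raise ValueError("PSI scores range from 0 to 100")
    if score >= 90:
        return "high"    # 90 or above
    if score >= 50:
        return "medium"  # 50 to 89
    return "poor"        # below 50


for value in (95, 72, 31):
    print(value, classify_psi_score(value))
```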

Even without PSI reports or a point system, one can tell when a site performs at a snail’s pace. Here is another common situation: the site opens instantly for developers but barely works for users, because home devices and networks are weaker than those at the programmer’s office.

Speeding up the website requires optimization

Now you need to understand who to contact and what to expect as a result. If the programmers are full-time employees, whoever wrote the code will optimize it. If the staff can’t handle it or are busy, or if the site is maintained by an outsourced team, you will need to find someone else.

Some tasks, like compressing images, you can do yourself or delegate to an SEO specialist or an administrator. The rest will require people who can read and write code.

Let’s see what front-end and back-end developers will do.

Optimizing code and content

The front-end developer won’t touch the texts but will instead concentrate on the design. They will replace heavy fonts with light ones and cut the number of fonts to three at most.

Videos will be spread over different pages instead of being clustered together. Videos at the top of a page will be moved further down.

The front-end developer will pay attention to images by compressing banners, backgrounds, icons, and illustrations. They will convert PNG to JPEG and will suggest replacing animation, slides, and special effects with static media. They will switch to lazy loading, where the browser first shows low-resolution images as so-called ‘placeholders’ and loads the original high-resolution images later. On a page with several screens of vertical scrolling, each image is loaded only when the user reaches the part of the page that contains it.
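As a rough illustration of the deferred-loading part, the sketch below adds the standard HTML `loading="lazy"` attribute to `<img>` tags that do not already set `loading`. The function name `add_lazy_loading` is hypothetical, the regex is deliberately naive (a real project would use an HTML parser), and it does not generate low-resolution placeholders. Images visible at the top of the page are usually better left to load eagerly.

```python
import re

# Match <img ...> tags that do not already carry a loading= attribute.
# Naive by design: it does not handle ">" inside attribute values.
IMG_WITHOUT_LOADING = re.compile(
    r"<img\b(?![^>]*\bloading\s*=)([^>]*)>", re.IGNORECASE
)


def add_lazy_loading(html: str) -> str:
    """Insert loading="lazy" into every <img> tag that lacks a loading attribute."""
    return IMG_WITHOUT_LOADING.sub(r'<img loading="lazy"\1>', html)


page = '<img src="banner.jpg" alt="Banner"><img src="logo.png" loading="eager">'
print(add_lazy_loading(page))
```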

The front-end developer will find and remove unused JavaScript and CSS code, then minify and combine what is left. They will also detect third-party code that slows the page down, such as widgets, scripts, and frames. These could be embedded social media posts, videos, and counters. Code that cannot be removed will be moved to the bottom of the page or handled with asynchronous or lazy loading.
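To give a feel for what minification does, here is a deliberately simplified sketch that strips comments and redundant whitespace from CSS. The function name `minify_css` is an assumption for this example, and the approach would damage string values that contain comment markers or repeated spaces, so in practice an established minifier is the safer choice.

```python
import re


def minify_css(css: str) -> str:
    """Naively shrink CSS: drop comments, collapse whitespace, trim punctuation."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                         # collapse runs of whitespace
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)            # no spaces around { } ; ,
    css = css.replace(";}", "}")                           # last semicolon is optional
    return css.strip()


source = """
/* page defaults */
body {
  color: red;
  margin: 0;
}
"""
print(minify_css(source))
print(len(source), "->", len(minify_css(source)), "characters")
```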

Rationalisation of the mobile version

It would make sense to hide some functions and decor, leaving only the essentials.

Google took a thorough approach to this issue. It trained a deep neural network on a data set of bounces and conversions on smartphones, with a prediction accuracy of 90%. The study showed that the probability of a bounce rises steadily with page loading time: it increases by 32% when loading time goes from 1 to 3 seconds, by 106% at 6 seconds, and by 123% at 10 seconds.

The Unbounce poll mentioned above revealed an interesting fact: Android users are more patient than iOS users. 61% of Android users are willing to wait up to 13 seconds, while 64% of iOS users will wait just 3 seconds. So if your target audience uses iOS, the mobile version requires close attention.

Optimizing the back end

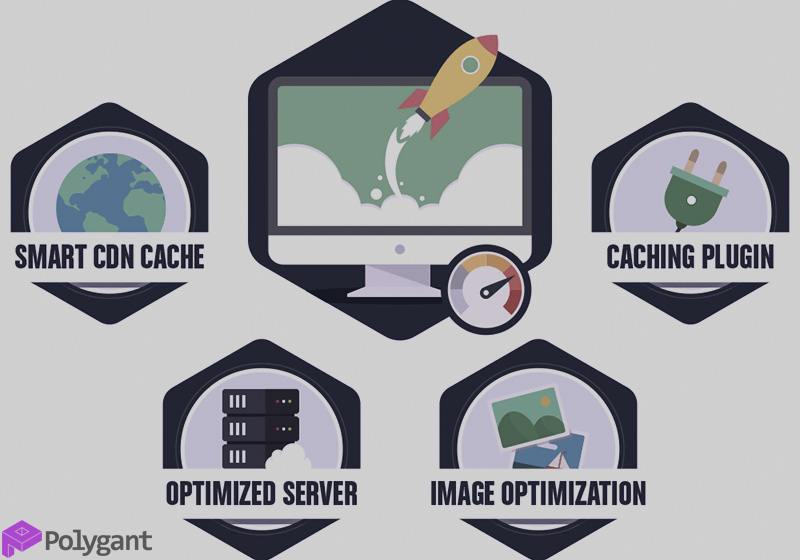

To speed up the server side, the back-end developer:

- Configures caching and compression

- Optimizes the database

- Reduces the number of requests

- Optimizes the server code and system resources

- Minimises redirects.

The list is shorter than for the front end, but it is more complex.
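Of the items above, compression is the easiest to demonstrate. The sketch below uses Python's standard `gzip` module to show how much a repetitive HTML response can shrink. The helper name `compression_ratio` and the sample markup are made up for this example; on a real site, compression is normally switched on in the web server or framework configuration and not hand-coded.

```python
import gzip


def compression_ratio(body: bytes, level: int = 6) -> float:
    """Return compressed size divided by original size (smaller is better)."""
    if not body:
        raise ValueError("body must not be empty")
    compressed = gzip.compress(body, compresslevel=level)
    return len(compressed) / len(body)


# Hypothetical response body: a product list with highly repetitive markup.
html = b"<li class='item'>Product</li>\n" * 200
print(f"original: {len(html)} bytes")
print(f"compressed to {compression_ratio(html):.1%} of the original size")
```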

Hardware setup

Let’s say your clients are in Western Europe and your server is in America, where server rental is cheaper. The further the user is from the server, the slower the site loads for them. In this case, you will need a CDN (content delivery network), which delivers content to each user from the server closest to their location.
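The idea of "the closest server" can be sketched with a great-circle distance calculation. Everything here is an assumption for illustration: the edge locations, their approximate coordinates, and the function names. Real CDNs typically route users through DNS or anycast and also consider network conditions, not only geographic distance.

```python
from math import asin, cos, radians, sin, sqrt

# Hypothetical edge servers with approximate (latitude, longitude).
EDGE_SERVERS = {
    "new-york": (40.71, -74.01),
    "frankfurt": (50.11, 8.68),
    "singapore": (1.35, 103.82),
}


def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371 * asin(sqrt(h))  # 6371 km is the mean Earth radius


def nearest_edge(user_location):
    """Pick the edge server geographically closest to the user."""
    return min(EDGE_SERVERS, key=lambda name: haversine_km(user_location, EDGE_SERVERS[name]))


paris = (48.86, 2.35)
print(nearest_edge(paris))
```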

PSI score accuracy

Scoring a perfect 100 is an unattainable dream. Even search engines fall short: in testing, google.com scores 80 and yahoo.com scores 89.

The score for the same URL changes depending on the time of day. The reason is fluctuating network bandwidth. At night, while everyone is asleep, the networks are not busy and work swiftly.

Alongside its score, PSI provides both lab and field data about a site. Lab data is collected using a load simulation. Field data is statistics from real users, with metrics taken from Chrome browser reports.
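Both kinds of data can also be retrieved programmatically through the PageSpeed Insights API. The sketch below builds a request URL for the v5 endpoint and reads the lab score from an already-parsed JSON response. The helper names are hypothetical, and the response fields used (`lighthouseResult` for lab data, `loadingExperience` for field data) reflect the v5 format as understood at the time of writing, so they should be checked against the official API reference. The sample response is a trimmed, made-up illustration, not real measurement data.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url: str, strategy: str = "mobile") -> str:
    """Build a PSI API request URL for the given page and device strategy."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def lab_score(response: dict) -> int:
    """Lab performance score, scaled from the API's 0-1 range to 0-100."""
    score = response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def has_field_data(response: dict) -> bool:
    """True if the response carries real-user metrics for the page."""
    return bool(response.get("loadingExperience", {}).get("metrics"))


# Made-up, trimmed response used only to exercise the helpers.
sample = {"lighthouseResult": {"categories": {"performance": {"score": 0.87}}}}
print(build_psi_url("https://example.com/"))
print(lab_score(sample), has_field_data(sample))
```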

Both types of data have flaws. The simulation doesn’t capture real-world bottlenecks since it runs using just one device and the same network. Field data doesn’t have this disadvantage, but it doesn’t evaluate all load parameters.

Getting a moderate score doesn’t mean the product is mediocre. Quality is evaluated not by one metric but by a set of them. Most importantly, the site should be attractive to people, not to measuring systems.